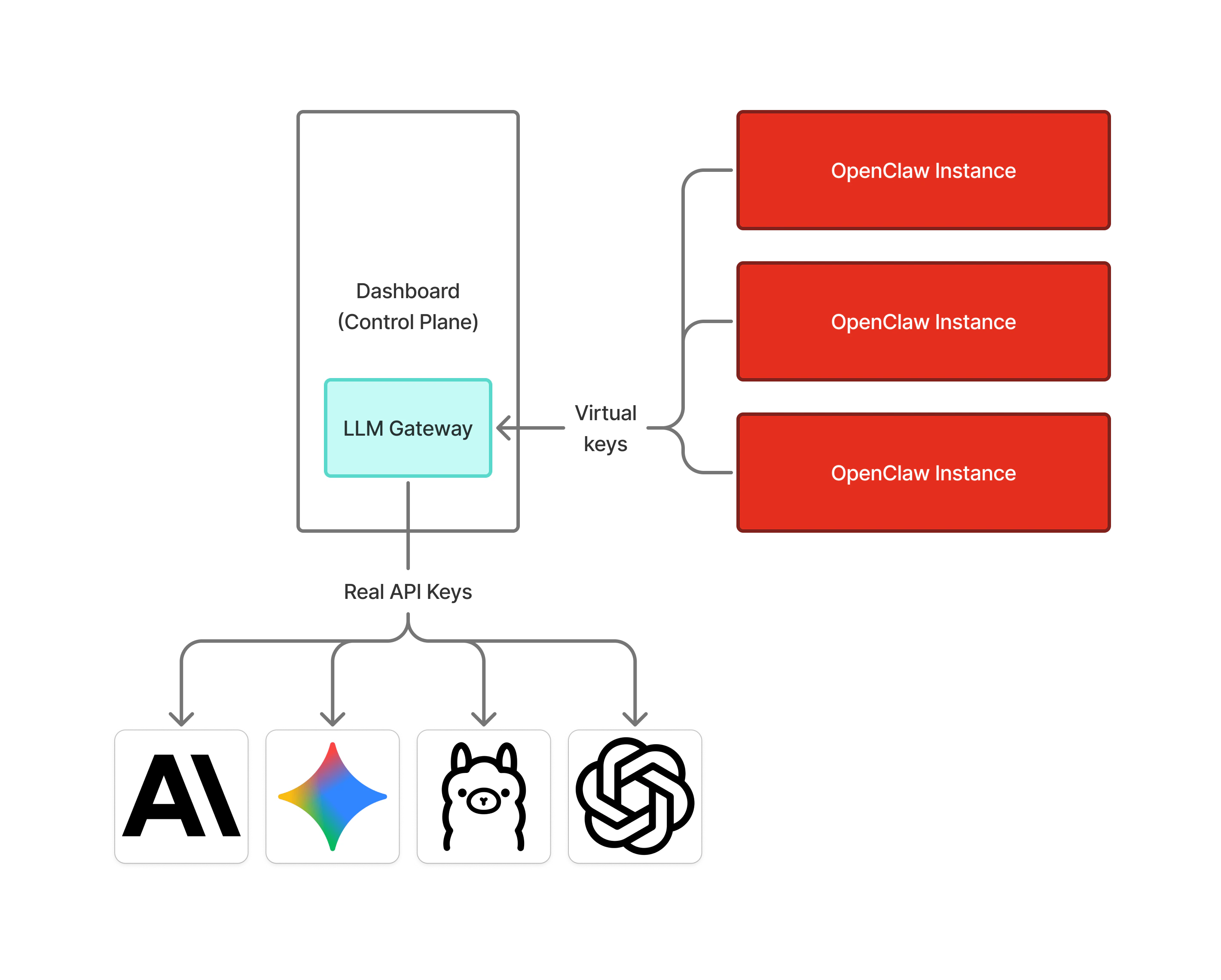

What is the LLM gateway?

Claworc includes a built-in LLM gateway — an internal proxy that sits between your agent instances and LLM provider APIs (Anthropic, OpenAI, Ollama, and more).

Security properties

- Credentials stay out of containers. Even if an agent container is compromised, there are no real API keys inside it to steal. A container breakout or log leak cannot expose your provider credentials.

- Encrypted at rest. All provider API keys — both global settings and per-instance overrides — are stored Fernet-encrypted in the database. The encryption key is auto-generated on first run and stored separately.

- Network isolation. The LLM gateway is never exposed on a public port. It is only reachable by OpenClaw instances.

- Per-instance revocation. Each virtual key belongs to exactly one instance and one provider. You can remove a provider from one instance, or delete a provider entirely, without affecting any other instance or rotating your real provider key.

- Audit trail. Every request through the gateway is logged with timing, token counts, status codes, and error details.
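
The virtual-key, revocation, and audit properties above can be illustrated with a small in-memory model. This is a minimal sketch, not Claworc's actual implementation: the `VirtualKeyRegistry` class, its method names, the `vk-` key prefix, and the 200/401 status values are all assumptions made for illustration. Token counts are left out because the sketch never calls a real provider.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class VirtualKeyRegistry:
    """Hypothetical model: one virtual key per (instance, provider) pair."""

    # Real provider keys exist only on the gateway side, never in a container.
    provider_keys: dict
    # Maps virtual key -> (instance, provider).
    virtual_keys: dict = field(default_factory=dict)
    # One record per resolution attempt, successful or not.
    audit_log: list = field(default_factory=list)

    def issue(self, instance: str, provider: str) -> str:
        """Create the single virtual key for this instance/provider pair."""
        self.revoke(instance, provider)  # keep the mapping one-to-one
        vkey = "vk-" + secrets.token_urlsafe(16)  # assumed key format
        self.virtual_keys[vkey] = (instance, provider)
        return vkey

    def revoke(self, instance: str, provider: str) -> None:
        """Drop one instance's access to one provider; other keys are untouched."""
        self.virtual_keys = {
            k: v for k, v in self.virtual_keys.items() if v != (instance, provider)
        }

    def resolve(self, vkey: str) -> Optional[str]:
        """Return the real provider key for a valid virtual key and log the attempt."""
        started = time.monotonic()
        entry = self.virtual_keys.get(vkey)
        real_key = self.provider_keys.get(entry[1]) if entry else None
        self.audit_log.append({
            "instance": entry[0] if entry else None,
            "provider": entry[1] if entry else None,
            "status": 200 if real_key else 401,  # assumed status values
            "duration_ms": (time.monotonic() - started) * 1000,
        })
        return real_key
```

In this model, revoking the key issued to one instance makes `resolve` return `None` for that key, while a key issued to another instance for the same provider keeps resolving to the same real provider key, which itself never changes.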